How Universities Detect AI in Dissertations – And How You Actually Stay Compliant in 2026

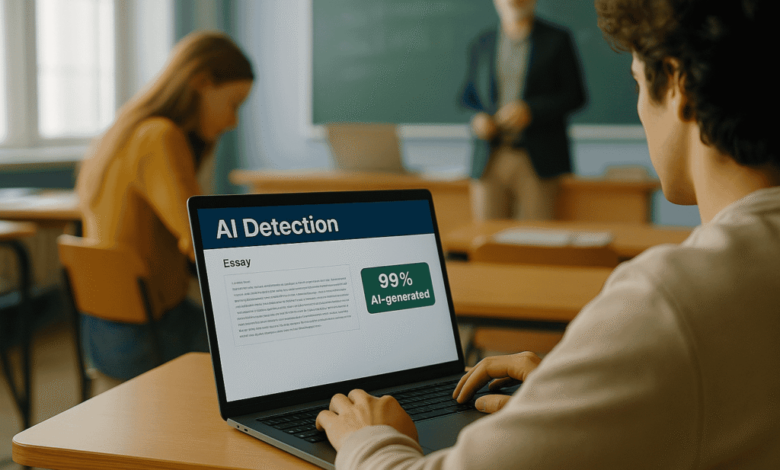

Turnitin just released fresh numbers in February 2026: 14.8% of English-language submissions now show 80% or more AI-generated text. That’s up from roughly 3% when their first detector launched back in 2023. For dissertation writers, the message is blunt. One heavy AI draft and your entire project can land under a microscope.

We’ve worked with enough graduate students to see the pattern. The detectors aren’t magic, but the combination of software flags plus old-fashioned professor instincts is getting sharper. The good news is compliance isn’t about swearing off every tool. It’s about understanding exactly what raises red flags and building work that holds up under real scrutiny.

What the Detectors Actually Measure in 2026

Most universities still run Turnitin, Copyleaks, or GPTZero as the first pass. These tools don’t “read” your argument. They score predictability (how formulaic each sentence feels), burstiness (the natural swing between short punchy sentences and longer ones), and overall rhythm. Pure AI output tends to land too even, too polished, too predictable.
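To make “burstiness” concrete, here is a rough sketch that treats it as the spread of sentence lengths relative to their average. This is an illustrative proxy only, not the formula Turnitin, Copyleaks, or GPTZero actually use (those are not published in full), and the function names `sentence_lengths` and `burstiness` are our own.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Count words per sentence, splitting naively after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    Assumption: a simple stand-in for 'burstiness'. Higher values mean
    more swing between short and long sentences; 0.0 means every
    sentence has the same word count.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


even = "The cat sat down. The dog ran off. The bird flew up."
varied = (
    "Stop. The committee asked three questions about my sampling "
    "frame before lunch. I answered."
)
print(burstiness(even), burstiness(varied))
```

The even sample scores zero because all three sentences are four words long, while the varied sample scores well above it. Treat the number as a way to see the idea, not as a prediction of what any detector will report.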

Turnitin itself now admits scores are indicators only, not proof. Many departments have already pulled back after seeing false positives hit non-native speakers hardest. Independent studies from 2025-2026 put real-world accuracy on edited text in the 60-85% range at best. Several big schools (Waterloo, Curtin, and a growing list of others) have quietly disabled the AI detection layer entirely because the noise outweighed the signal.

The software is just the opening act.

What Professors Actually Look For Beyond the Score

Faculty know your voice from seminars, earlier papers, and discussion boards. A sudden jump to flawless academic prose is the fastest way to start a conversation. They also check citation depth (AI still invents sources that don’t exist), logical gaps in your synthesis, and whether you can defend every claim in a viva without notes.

The bigger shift in 2026 is “proof of process.” Departments now routinely ask for draft histories, reflection logs, prompt records if you used any AI, or even recorded office-hour discussions. Policies at places like NYU, Cornell, and Pitt make it explicit: a detector score alone won’t trigger misconduct charges. They want evidence you did the thinking.

That change matters. It moves the game from “beat the machine” to “show your work like a real researcher.”

The Real Risk with Dissertation AI Tools

Full-section dissertation AI generators promise a complete chapter in minutes. The output often passes a quick read because it sounds academic. What it can’t hide is the lack of personal stake or original synthesis. Once flagged, the burden lands on you to prove ownership. We’ve watched strong students burn weeks rebuilding trust instead of finishing their defense prep.

The tools aren’t evil. The problem is treating them as a ghostwriter instead of a brainstorming partner. Heavy human rewriting can fix the fingerprint, but most people skip the hard part and get caught.

Practical Ways to Stay Compliant Without Losing Your Mind

Start with your department handbook and syllabus. Policies vary wildly in 2026. Some programs treat AI like a calculator for outlines and grammar. Others demand full disclosure in the methods section.

Document everything. Keep version histories, notes on how you developed ideas, and prompt logs if you used any assistance. When policy allows limited AI use, state it clearly and show the revisions.
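If you draft in plain text, Markdown, or LaTeX, a small script can keep that record for you. The sketch below is one possible approach, not a format any university requires: each time you finish a session, it appends a timestamped entry with a file hash, a word count, and a short note about what you changed. The function name `log_draft` and the log file name `process_log.jsonl` are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def log_draft(draft_path: str, note: str,
              log_path: str = "process_log.jsonl") -> dict:
    """Append one timestamped record about the current draft to a log.

    Assumption: the draft is a UTF-8 text file. For binary formats such
    as .docx the hash is still valid but the word count is meaningless.
    """
    data = Path(draft_path).read_bytes()
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "file": Path(draft_path).name,
        "sha256": hashlib.sha256(data).hexdigest(),
        "words": len(data.decode("utf-8", errors="ignore").split()),
        "note": note,  # e.g. what changed, or which prompt you used
    }
    # One JSON object per line keeps the log easy to read and append to.
    with open(log_path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry
```

Calling `log_draft("chapter2.md", "Rewrote section 2.3 after supervisor feedback")` at the end of a writing session adds one line to the log. A log like this is a personal record rather than proof on its own, so keep it alongside the version history your editor or cloud storage already provides.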

Focus your own effort where AI still falls flat: original cross-source synthesis, nuanced critique, and the ability to stand in front of your committee and own every argument. Those are the parts that make the work yours.

If deadlines are piling up and you need human-quality output that actually passes every check, professional academic writers can close the gap cleanly. Services like Hire Book Writers deliver original, voice-driven work because it’s written by experienced humans from the start. No detector fingerprint, no policy gray areas, just compliant content that reflects real research effort.

When a Literature Review Tool Becomes the Only Option

Literature reviews are the section that breaks most people. A literature review generator can spit out organized paragraphs and source lists fast. The trap is the flat, citation-light style that detectors now spot instantly.

Used right, it’s a starting scaffold. Used as-is, it reads like algorithm output instead of the work of a researcher who actually wrestled with the field. The fix is brutal but simple: rewrite every paragraph in your own voice, add the critical connections only you see, and verify every single reference against the original text. Skip that, and you’re handing your committee the exact pattern they’re trained to notice.

2026 Dissertation Compliance Checklist

Here’s the short, practical list we give every client before they submit:

- Check current department AI policy and note allowed uses

- Keep full draft history and reflection notes

- Verify every citation against the original source

- Read the full draft aloud – does it sound like you?

- Prepare to explain every major claim without notes

- Disclose AI assistance exactly where required

- Run a final self-check for uniform sentence rhythm

Save it, share it, bookmark it. This is the kind of simple tool that actually gets referenced.

Bottom Line

Detection tech will keep evolving, but the universities that matter are moving past the arms race. The strongest dissertations in 2026 come from students who treat AI as a drafting aid, revise ruthlessly, cite transparently, and keep their own analysis front and center.

Do that, and you don’t just dodge penalties; you build work you can actually defend. That’s the only compliance strategy worth carrying past graduation.